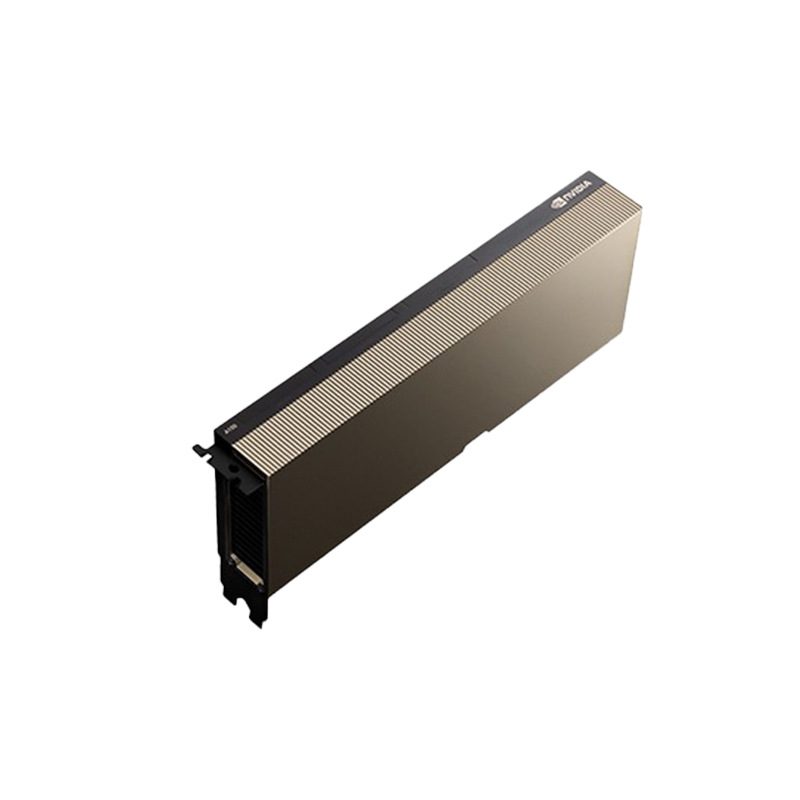

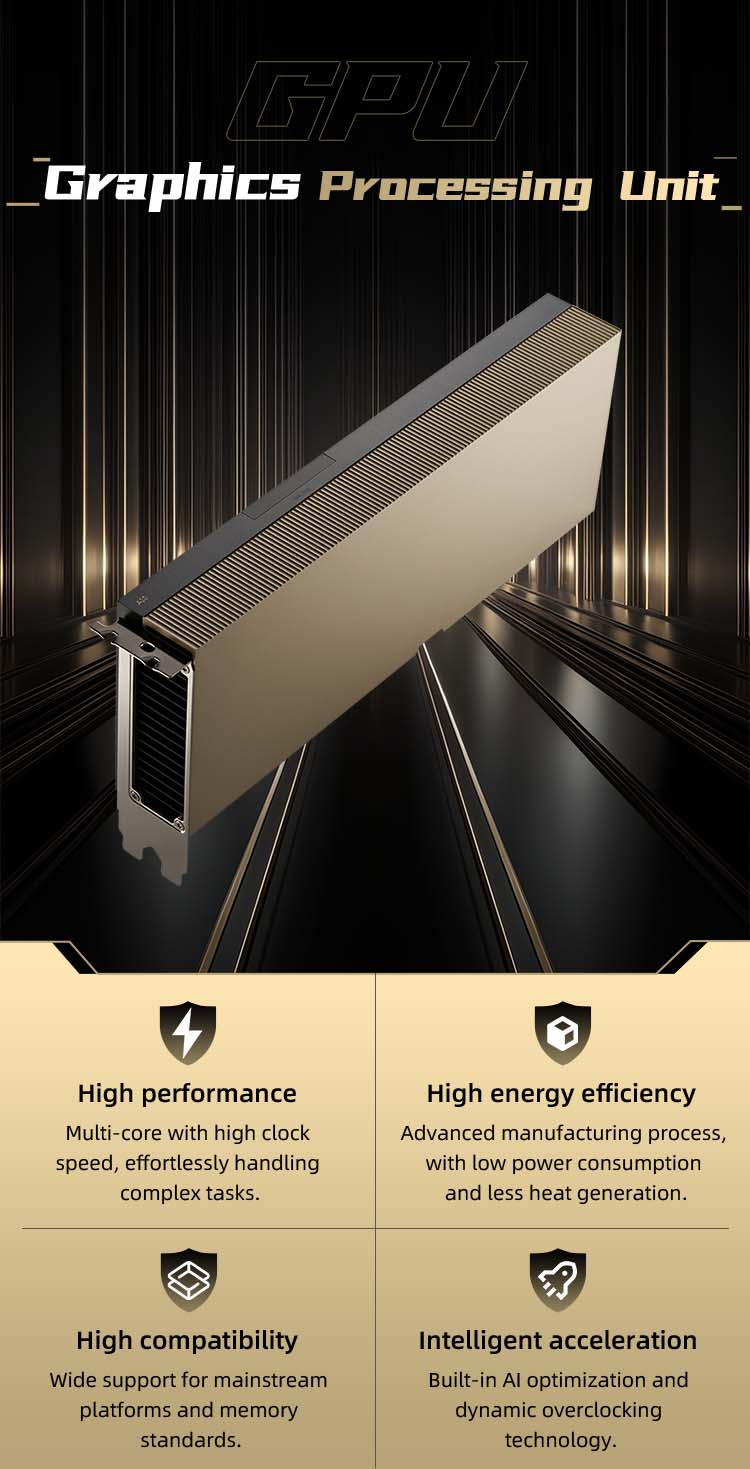

The NVIDIA H200 Tensor Core GPU is the latest-generation high-performance computing and generative AI accelerator based on the Hopper architecture, purpose-built to handle complex AI inference and high-performance computing (HPC) workloads.

| Specification | Value | Specification | Value |
| --- | --- | --- | --- |
| Brand | NVIDIA | Model | H200 141GB SXM |
| Item number | H200 141GB SXM | Product dimensions | 268 mm x 111 mm, double slot |
| Chip manufacturer | NVIDIA | Chip model | H200 141GB SXM |
| Interface | PCIe | Memory capacity | 141 GB |
| Core bus width | 5120-bit | Graphics card slot | PCIe 5.0 x16 |
| Video memory type | HBM3e | Memory interface | 6144-bit |
| Core frequency | 1000 MHz | Memory clock | 1593 MHz |
| Stream processors | 14592 | 3D API | DirectX 12 |

Memory & Bandwidth

The H200 is the first GPU equipped with HBM3e memory, featuring 141GB of memory capacity and a memory bandwidth of up to 4.8 terabytes per second (TB/s), representing a 1.4x improvement over the H100’s 3.35 TB/s. This larger memory capacity and faster bandwidth significantly accelerate data processing for generative AI and high-performance computing workloads.
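The bandwidth comparison above can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in this section (4.8 TB/s, 3.35 TB/s, 141GB). The function names are illustrative, and the sweep time is a theoretical lower bound that assumes decimal units (1 TB = 1000 GB) and sustained peak bandwidth, which real workloads rarely reach.

```python
# Figures quoted in the text above.
H200_BANDWIDTH_TBPS = 4.8   # peak memory bandwidth, TB/s
H100_BANDWIDTH_TBPS = 3.35  # peak memory bandwidth, TB/s
H200_MEMORY_GB = 141        # memory capacity, GB


def bandwidth_ratio(new_tbps: float, old_tbps: float) -> float:
    """Return how many times faster the new bandwidth is than the old one."""
    return new_tbps / old_tbps


def full_memory_sweep_ms(capacity_gb: float, bandwidth_tbps: float) -> float:
    """Idealized time (ms) to read the whole memory once at peak bandwidth.

    Assumes 1 TB = 1000 GB and that peak bandwidth is sustained.
    """
    bandwidth_gb_per_s = bandwidth_tbps * 1000
    return capacity_gb / bandwidth_gb_per_s * 1000


ratio = bandwidth_ratio(H200_BANDWIDTH_TBPS, H100_BANDWIDTH_TBPS)
sweep_ms = full_memory_sweep_ms(H200_MEMORY_GB, H200_BANDWIDTH_TBPS)

print(f"H200 vs H100 bandwidth: {ratio:.2f}x")       # 1.43x
print(f"Full 141GB read at peak: {sweep_ms:.1f} ms")  # 29.4 ms
```

The ratio comes out to roughly 1.43, which is consistent with the rounded 1.4x figure. The sweep time gives a rough sense of why bandwidth matters for inference: a workload that must read most of the GPU memory for each step cannot complete a step faster than this bound.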

High-Performance Computing (HPC) Acceleration

The H200 excels in scientific computing and simulation tasks, delivering up to 110x performance gains over traditional CPUs, driven by higher memory bandwidth and an optimized compute architecture. This gives it a distinct advantage in memory-intensive applications such as scientific research and simulations.

Energy Efficiency & Cost Optimization

While delivering exceptional performance, the H200 maintains the same power envelope as the H100 (up to 700W), with higher energy efficiency and a lower total cost of ownership (TCO). This makes it not only faster but also more eco-friendly in AI factories and supercomputing systems.
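One way to make the efficiency claim concrete is to compare memory bandwidth per watt at the same power limit. The sketch below is only an illustration: it reuses the bandwidth figures quoted on this page (4.8 TB/s and 3.35 TB/s), assumes both GPUs run at the full 700W limit, and treats bandwidth per watt as a stand-in metric. It is not a measured efficiency result, and the function name is hypothetical.

```python
POWER_LIMIT_W = 700  # shared power envelope stated above (assumed fully used)


def bandwidth_per_watt(bandwidth_tbps: float, power_w: float) -> float:
    """Peak memory bandwidth in GB/s delivered per watt of board power."""
    return bandwidth_tbps * 1000 / power_w


h200_gbps_per_w = bandwidth_per_watt(4.8, POWER_LIMIT_W)
h100_gbps_per_w = bandwidth_per_watt(3.35, POWER_LIMIT_W)

print(f"H200: {h200_gbps_per_w:.2f} GB/s per W")  # 6.86 GB/s per W
print(f"H100: {h100_gbps_per_w:.2f} GB/s per W")  # 4.79 GB/s per W
```

Because the power limit is the same in both cases, the per-watt advantage equals the raw bandwidth advantage of about 1.4x.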

Use Cases

The H200 is suitable for a wide range of scenarios including generative AI, scientific computing, and enterprise AI inference. It also supports NVIDIA AI Enterprise software, streamlining AI development and deployment workflows.
